Using Containers to Maintain Apps

When developing software, you want to make sure that it runs reliably in any computing environment. Along the way, chances are you will move your software from one computing environment to another: from your own PC to a test environment, from test to staging, from staging to production, or even from a physical machine to a virtual machine in the cloud.

The problem is that not all environments are identical. Solomon Hykes, the founder and CTO of Docker, explains: “You’re going to test using Python 2.7, and then it’s going to run on Python 3 in production and something weird will happen. Or you’ll rely on the behavior of a certain version of an SSL library and another one will be installed. You’ll run your tests on Debian and production is on Red Hat and all sorts of weird things happen.” He added that the differences go beyond software versions: “The network topology might be different, or the security policies and storage might be different but the software has to run on it.”

Containers

A container is an entire runtime environment: the application plus the dependencies, libraries, binaries, and configuration files needed to run it, bundled into one package. Because everything the application needs travels with it, we don't have to worry about differences between the underlying environments, such as the OS distribution or the library versions installed on the host. As a result, we can run our application reliably in different environments.

Containers work especially well for applications built on a microservice architecture, which breaks the application up into services that are each packaged in a separate container. For organizations that practice continuous integration and continuous delivery (CI/CD), containers offer scalable, short-lived instances of applications or services that can be started and stopped on demand.

Other benefits of using containers:

- Smaller footprint compared to virtual machines. A container image might not even exceed a hundred megabytes, whereas a virtual machine with its own operating system might be several gigabytes.

- Faster (or even near-immediate) startup and shutdown. A container can start almost instantly, while a virtual machine might take minutes to boot. This means we can use containers on demand: start them when they are needed and remove them when they are no longer needed.

- Greater modularity. Containers make it easier to adopt the microservices approach. Rather than running one complex monolithic application, we can split it into modules such as the database, the frontend, and so on.

Container Tools

Containers play a major role in high-availability computing, and many tools can help you run and manage them. Docker is the most popular, followed by others such as Podman, rkt, OKD, and OpenShift. For examples and documentation, see each tool's official site.

Container Orchestration

Despite all the benefits above, containers raise one problem at scale. With 5 containers and 2 applications, you probably won't run into problems managing their deployment and maintenance. With 500 containers and 100 applications, however, managing everything by hand quickly becomes impractical, and dedicated management becomes essential. This is where container orchestration comes into play.

In general, container orchestration automates the deployment, management, scaling, and networking of containers, in other words, their lifecycle. Orchestration tools can also automate and manage tasks such as:

- Provisioning and deployment

- Configuration and scheduling

- Resource allocation

- Container availability

- Scaling containers up or down to balance workloads across your infrastructure

- Load balancing and traffic routing

- Monitoring container health

- Configuring applications based on the container in which they will run

- Keeping interactions between containers secure
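To make the scaling item above more concrete: autoscalers often use a simple proportional rule. The Kubernetes Horizontal Pod Autoscaler, for example, documents the formula desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The Python sketch below applies that rule; the function name, the `min_replicas`/`max_replicas` bounds, and the example numbers are illustrative assumptions, and it leaves out details such as the HPA's tolerance band and stabilization window.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Proportional scaling rule modeled on the Kubernetes HPA formula.

    Simplified sketch: no tolerance band, no stabilization window.
    """
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    # Scale the replica count by how far the observed metric is from the target.
    desired = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp the result to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


# 3 replicas averaging 90% CPU against a 60% target: ceil(4.5) = 5 replicas.
print(desired_replicas(3, 90, 60))
```

Scaling down uses the same rule: 4 replicas averaging 20% CPU against a 60% target would shrink to 2, and the bounds keep the result from dropping to zero or growing without limit.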

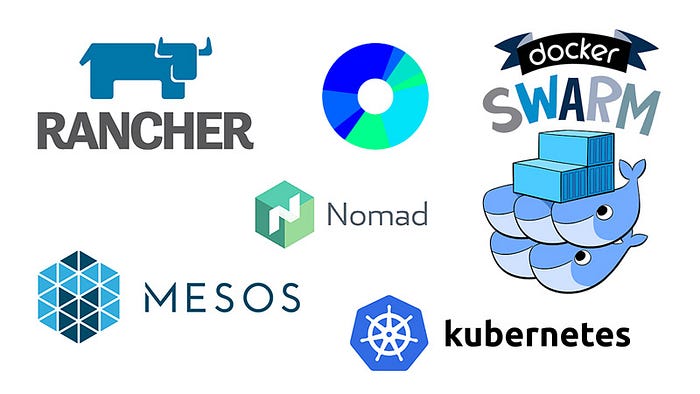

There are various tools that help you orchestrate containers, such as Kubernetes, Docker Swarm, and Apache Mesos. Each has its pros and cons, but they all share one purpose: managing containers.

How Container Orchestration Works

One of the most important parts of orchestration is describing the configuration of an application in a YAML or JSON file. The configuration file tells the orchestration tool where to find the container images, how to set up networking, and where to store the logs.
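As an illustration, here is a hypothetical JSON configuration for a two-service app, together with a small Python check that could run before the file is handed to an orchestrator. The field names (`services`, `image`, `networks`, `logging`) loosely follow Docker Compose, but the service names, image URLs, and the `check_config` helper are assumptions made up for this sketch; it is not a complete or validated Compose file.

```python
import json

# Hypothetical configuration: where to find each image, which network each
# service joins, and how the API's logs are stored.
CONFIG_JSON = """
{
  "services": {
    "api": {
      "image": "registry.example.com/myapp/api:1.0",
      "networks": ["backend"],
      "logging": {"driver": "json-file", "options": {"max-size": "10m"}}
    },
    "db": {
      "image": "postgres:16",
      "networks": ["backend"]
    }
  },
  "networks": {"backend": {}}
}
"""


def check_config(config: dict) -> list:
    """Return a list of problems found in the configuration (empty if none)."""
    problems = []
    declared = set(config.get("networks", {}))
    for name, service in config.get("services", {}).items():
        # Every service must say where its container image comes from.
        if "image" not in service:
            problems.append(f"{name}: no image specified")
        # Every network a service joins must be declared at the top level.
        for net in service.get("networks", []):
            if net not in declared:
                problems.append(f"{name}: unknown network '{net}'")
    return problems


config = json.loads(CONFIG_JSON)
print(check_config(config))
```

Keeping the whole description in one declarative file is what lets the orchestrator, rather than a person, work out how to bring the app up in each environment.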

When a new container is deployed, the orchestration tool automatically schedules it onto a cluster and finds a suitable host, taking into account any defined requirements or restrictions. The orchestration tool then manages the container's lifecycle based on the specifications in the configuration file.
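The host-selection step can be sketched in Python as a filter-then-pick pass, in the spirit of how schedulers such as kube-scheduler first filter and then score nodes. Everything here is a simplified assumption for illustration: the `Host` and `ContainerSpec` types, the label-based constraints, and the "most free memory wins" rule are hypothetical, and real schedulers weigh many more factors.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Host:
    name: str
    free_cpu: float                              # CPU cores still available
    free_mem_mb: int                             # memory still available, in MB
    labels: dict = field(default_factory=dict)   # e.g. {"disk": "ssd"}


@dataclass
class ContainerSpec:
    name: str
    cpu: float
    mem_mb: int
    constraints: dict = field(default_factory=dict)  # labels a host must have


def schedule(spec: ContainerSpec, hosts: List[Host]) -> Optional[Host]:
    # Filter: keep hosts with enough resources that match every constraint.
    feasible = [
        h for h in hosts
        if h.free_cpu >= spec.cpu
        and h.free_mem_mb >= spec.mem_mb
        and all(h.labels.get(k) == v for k, v in spec.constraints.items())
    ]
    if not feasible:
        return None  # a real orchestrator would keep the container pending
    # Pick: prefer the host with the most free memory, which spreads load.
    best = max(feasible, key=lambda h: h.free_mem_mb)
    # Reserve the container's resources on the chosen host.
    best.free_cpu -= spec.cpu
    best.free_mem_mb -= spec.mem_mb
    return best


hosts = [
    Host("node-a", 2.0, 4096, {"disk": "ssd"}),
    Host("node-b", 4.0, 8192, {"disk": "hdd"}),
    Host("node-c", 1.0, 2048, {"disk": "ssd"}),
]
db = ContainerSpec("db", cpu=1.0, mem_mb=1024, constraints={"disk": "ssd"})
print(schedule(db, hosts).name)  # node-a: only SSD hosts qualify
```

In this example, node-b has the most free memory but fails the `disk: ssd` constraint, so the database container lands on node-a instead.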

Our Project

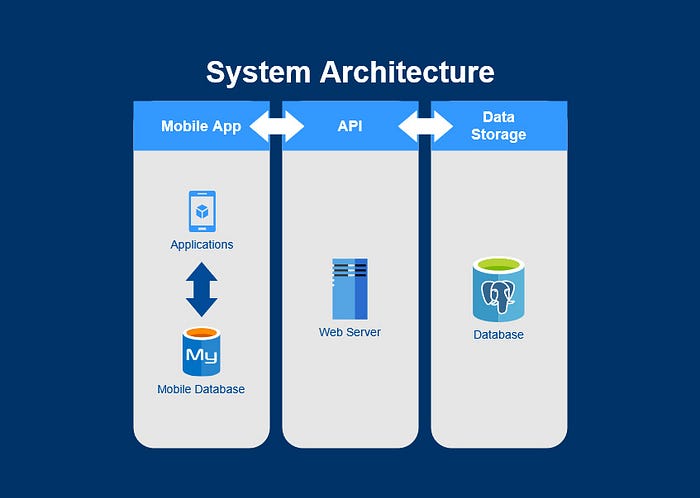

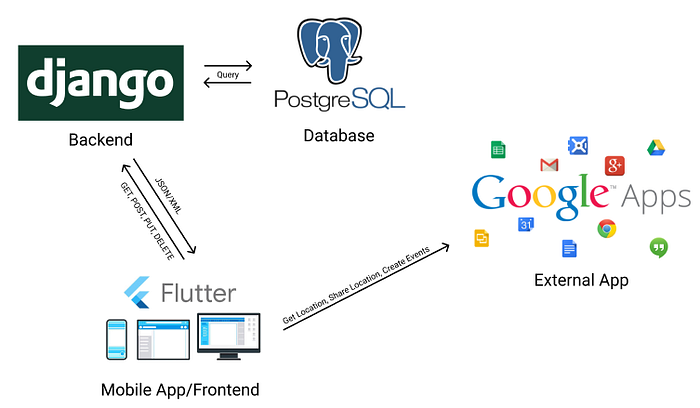

I am currently working on a project for a client in the Proyek Perangkat Lunak (in English, Software Project) class. I have designed a simple system architecture for our application. It's the traditional frontend, backend, and database architecture. Here's an illustration below.

The frontend is a mobile app, which also uses the phone's storage to keep some data locally. The backend is a Django-based web server that serves as the API from which the mobile app requests data. Data is stored in a PostgreSQL database.

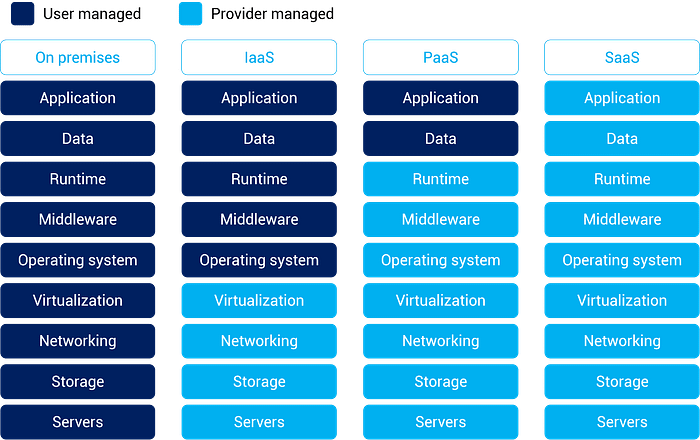
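Because the backend talks to a managed PostgreSQL database, its connection settings should come from the environment rather than being hard-coded. Heroku Postgres, for instance, exposes the connection string through a `DATABASE_URL` config var. The Python sketch below turns such a URL into Django's `DATABASES` format; it is an assumption about how a project like ours could be configured, not our actual settings, and the `database_settings` helper and the local fallback URL are hypothetical (packages such as dj-database-url offer a maintained version of this idea).

```python
import os
from urllib.parse import unquote, urlparse


def database_settings(url: str) -> dict:
    """Translate a postgres:// URL into a Django-style DATABASES['default'] dict."""
    parsed = urlparse(url)
    if parsed.scheme not in ("postgres", "postgresql"):
        raise ValueError(f"unsupported scheme: {parsed.scheme!r}")
    return {
        "ENGINE": "django.db.backends.postgresql",
        "NAME": parsed.path.lstrip("/"),
        # Credentials may be percent-encoded in the URL, so decode them.
        "USER": unquote(parsed.username or ""),
        "PASSWORD": unquote(parsed.password or ""),
        "HOST": parsed.hostname or "",
        "PORT": str(parsed.port or 5432),  # fall back to PostgreSQL's default port
    }


# In settings.py: use the platform's DATABASE_URL when it is set,
# otherwise a hypothetical local development database.
DATABASES = {
    "default": database_settings(
        os.environ.get("DATABASE_URL", "postgres://dev:dev@localhost:5432/app_dev")
    )
}
```

The same code then runs unchanged on a developer's machine and on the PaaS; only the environment variable differs, which is the kind of environment difference this article is about.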

Right now, we are not using containers (and therefore no container orchestration either) to build our app. Our app also follows the monolithic approach, so it is not broken up into microservices or separate modules. In addition, we currently deploy our API to Heroku, a Platform as a Service (PaaS) where we manage only our application and data, while the OS, servers, networking, and so on are handled by Heroku itself. Heroku does run apps in its own lightweight containers, called dynos, but it manages them for us. For these reasons, we chose not to use containers ourselves for now.

Another reason we did not use containers is that our frontend is a mobile app. As far as I know, mobile apps don't need to be containerized to run efficiently: you just install the APK and can use the app immediately. Therefore, containers are not needed right now.

Last Thoughts

From the explanation above, if your project follows a microservice architecture, containers are beneficial, since you want high modularity and containers provide it. For projects that follow a monolithic architecture, containers are not essential, but you can still use them when you need to move between environments or want their other benefits. In general, containers offer many benefits and are worth using whenever you can, since in the long run they make a project easier to develop and deploy, especially a large-scale one.

Sources: